Result Report November

1. TODOs

- Compare autoregressive vs non-autoregressive

- Add more input parameters (load forecast)

- Quantile Regression sampling fix

- Quantile Regression exploration

2. Autoregressive vs Non-Autoregressive

Training data: 2015 - 2022

Batch_size: 1024

Learning_rate: 0.0003

Early_stopping: 10

2.1 Linear Model

Comparison: Link

| | Autoregressive | Non-Autoregressive |
|---|---|---|
| Experiment (ClearML) | Link | Link |
| Train-MAE | 68.0202865600586 | 94.83179473876953 |
| Train-MSE | 7861.2197265625 | 15977.8759765625 |
| Test-MAE | 78.05316925048828 | 104.11575317382812 |
| Test-MSE | 10882.755859375 | 21145.583984375 |

2.2 Non Linear Model

Hidden layers: 1

Hidden units: 512

Comparison: Link

| | Autoregressive | Non-Autoregressive |
|---|---|---|
| Experiment (ClearML) | Link | Link |
| Train-MAE | 66.78179931640625 | 94.52633666992188 |
| Train-MSE | 7507.53955078125 | 15835.671875 |
| Test-MAE | 77.63729095458984 | 104.07229614257812 |
| Test-MSE | 10789.544921875 | 21090.9765625 |

A Non-Autoregressive model with 3 hidden layers was also tried; the test results did not improve.

3. Quantile Regression

3.1 Sampling Fix

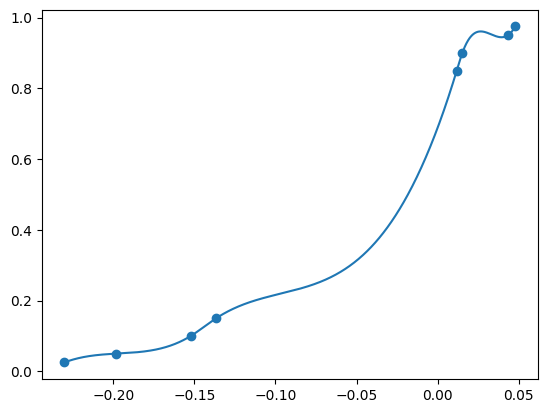

The model outputs one value for each quantile it is trained on. For example, with the quantiles [0.025, 0.05, 0.1, 0.15, 0.85, 0.9, 0.95, 0.975], the model outputs values such as [-0.23013, -0.19831, -0.15217, -0.13654, 0.011687, 0.015129, 0.043187, 0.047704]. Plotting these as a CDF:

Plotted output values with cubic interpolation

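Samples drawn from a uniform distribution on [0, 1] can be converted to samples from our distribution by passing them through the inverse CDF. In Python we can interpolate the inverse CDF directly by swapping the x and y axes: the quantiles become the inputs and the model outputs become the values. This gives us the following code: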

interp1d(quantiles, output_values, kind='quadratic', bounds_error=False, fill_value="extrapolate")

The mean of any chosen number of samples drawn this way can then be calculated.
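The sampling procedure described above can be sketched end to end as follows. This is a minimal illustration, not the project's actual implementation: the quantiles and output values are the example numbers from this section, while the function name `sample_from_quantiles`, the sample count, and the fixed seed are assumptions made for the sketch.

```python
import numpy as np
from scipy.interpolate import interp1d

# Quantile levels and example model outputs taken from the text above.
quantiles = np.array([0.025, 0.05, 0.1, 0.15, 0.85, 0.9, 0.95, 0.975])
output_values = np.array([-0.23013, -0.19831, -0.15217, -0.13654,
                          0.011687, 0.015129, 0.043187, 0.047704])

# Inverse CDF: probability level in, value out (x and y axes swapped).
inverse_cdf = interp1d(quantiles, output_values, kind="quadratic",
                       bounds_error=False, fill_value="extrapolate")


def sample_from_quantiles(n_samples, seed=0):
    """Draw samples via inverse transform sampling (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    # Uniform draws on [0, 1) play the role of probability levels.
    u = rng.uniform(0.0, 1.0, size=n_samples)
    # Levels outside [0.025, 0.975] are extrapolated by the interpolator.
    return inverse_cdf(u)


samples = sample_from_quantiles(1000)
sample_mean = samples.mean()
print(sample_mean)
```

Quadratic interpolation is not guaranteed to be monotonic between the given points, so it may be worth checking the interpolated curve before relying on the samples, especially in the extrapolated tails.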

3.2 Exploration

3.2.1 Linear Model

Learning Rate: 0.0003

Batch Size: 1024

Early Stopping: 10

Training Data: 2015 - 2022

| Quantiles | Train-MAE | Train-MSE | Test-MAE | Test-MSE |
|---|---|---|---|---|
| 0.025, 0.1, 0.2, 0.3, 0.5, 0.6, 0.8, 0.85, 0.9, 0.975 | 68.07254628777868 | 7872.668472187121 | 78.11135584669907 | 10903.793883789216 |
| 0.025, 0.1, 0.15, 0.2, 0.5, 0.8, 0.85, 0.9, 0.975 | 68.0732244289865 | 7873.212834241974 | 78.1143230666738 | 10907.350919114313 |
| 0.025, 0.05, 0.1, 0.15, 0.85, 0.9, 0.95, 0.975 | 68.2798014428824 | 7936.7644114273935 | 78.50109206637464 | 11005.706457116454 |
| 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9 | 68.06139224378171 | 7871.467571921973 | 78.10843456751378 | 10904.55519059502 |
| 0.1, 0.15, 0.2, 0.25, 0.3, 0.35, 0.4, 0.45, 0.5, 0.55, 0.6, 0.65, 0.7, 0.75, 0.8, 0.85, 0.9 | 68.0350635204691 | 7868.661882749334 | 78.1139478264609 | 10905.562798801011 |
| 0.1, 0.2, 0.3, 0.35, 0.4, 0.45, 0.5, 0.55, 0.6, 0.65, 0.7, 0.8, 0.9 | 68.03191172170285 | 7868.483061240721 | 78.13204232055722 | 10908.837301340453 |

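All quantile sets give nearly identical results: Test-MAE ranges from 78.11 to 78.50 and Test-MSE from 10903.8 to 11005.7. The only set without central quantiles (0.025, 0.05, 0.1, 0.15, 0.85, 0.9, 0.95, 0.975) is slightly worse on all four metrics.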

3.2.2 Non Linear Model

Learning Rate: 0.0003

Batch Size: 1024

Early Stopping: 10

Training Data: 2015 - 2022

Hidden Layers: 3

Hidden Units: 1024

| Quantiles | Train-MAE | Train-MSE | Test-MAE | Test-MSE |
|---|---|---|---|---|
| 0.1, 0.2, 0.3, 0.35, 0.4, 0.45, 0.5, 0.55, 0.6, 0.65, 0.7, 0.8, 0.9 | 65.6542529596313 | 7392.5142575554955 | 77.55779692831604 | 10769.161724849037 |
| 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9 | 65.68495924348356 | 7326.2239225611975 | 77.62433888969542 | 10789.003223366473 |